Undetectable model stealing with covert learning

Ari Karchmer — October 2023

Based on my papers [CK21] and [Kar23], presented at TCC '21 and SaTML '23.

TLDR

Can machine learning models be protected from theft when served through a query interface? Observational Model Extraction Defenses (OMEDs) try to detect adversarial clients by monitoring their query patterns. We show that OMEDs are fundamentally limited: using Covert Learning—a cryptographic learning paradigm where queries are indistinguishable from random—an attacker can extract a model while appearing completely benign to any efficient OMED. The upshot is that no polynomial-time observational defense can provably prevent model extraction for concept classes like decision trees, assuming standard cryptographic hardness (LPN). This result is theoretical in nature, but the possibilities are not.

INTRODUCTION

Consider LLM chatbots like OpenAI's ChatGPT or Anthropic's Claude. Could a prolonged interaction with ChatGPT be sufficient to "clone" it? In other words, could someone engage with Claude extensively enough to develop "Claudio," a reasonable impersonation of Claude?

In a model extraction attack, an adversary maliciously probes an interface to a machine learning (ML) model in an attempt to extract the ML model itself. Model extraction was first introduced as a risk by Tramer et al. [TZJ+16] at USENIX '16.

Besides impersonating chatbots, having an ML model stolen poses significant risks in any machine learning as a service (MLaaS) scenario. Two main concerns stand out:

- Intellectual Property Concerns. Often, an ML model is considered confidential and represents valuable intellectual property that should remain proprietary. In extreme cases, such as modern Large Language Models (LLMs), the model in the wrong hands could lead to significant problems.

- Security and Privacy Concerns. Preventing model extraction enhances security and privacy. For instance, adversarial example attacks become easier once the model is known rather than being a black-box (see e.g., [CJM20] and references therein). The same holds true for model inversion attacks, where adversaries obtain information about the data used to train the model.

DEFENDING AGAINST MODEL EXTRACTION

Clearly, preventing model extraction is important. Understanding how to defend against model extraction has been extensively studied in academic literature (see e.g., [KMAM18], [TZJ+16], [CCG+20], [JSMA19], [PGKS21]).

Most model extraction defenses (MEDs) in the literature fall into two categories:

- Noisy Responses. The first type aims to limit the amount of information revealed by each client query. One intuitive proposal is to add independent noise, meaning the model may respond incorrectly with some probability.

- Observational Model Extraction Defenses (OMEDs). The second type aims to identify "benign" clients, who seek to obtain predictions without attempting to extract the model, and "adverse" clients, which aim to extract the model. To differentiate between the two, the defense observes client behavior before making a decision. This approach does not alter the ML model itself.

In this post, we focus on OMEDs rather than noisy response defenses, since the latter inherently sacrifice predictive accuracy—making them unsuitable for critical ML applications such as autonomous driving, medical diagnosis, or malware detection.

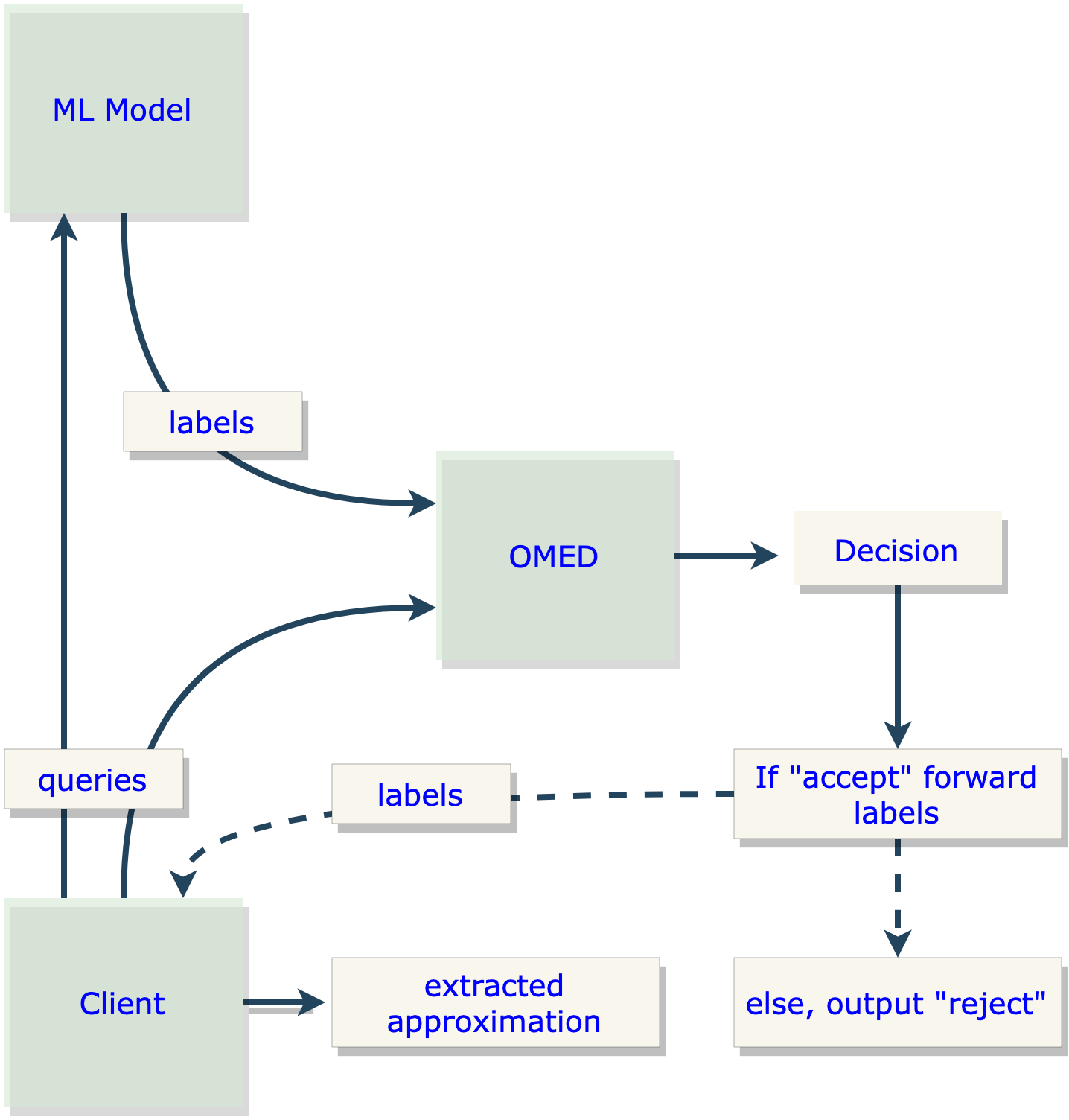

OMEDs in detail

OMEDs preserve accuracy entirely since they do not modify the underlying model. A common implementation involves a "monitor" that analyzes a batch of client requests to compute a statistic measuring the likelihood of adversarial behavior. The goal is to reject a client's requests if their behavior surpasses a certain threshold. Examples can be found in [KMAM18], [JSMA19], and [PGKS21].

Essentially, observational defenses aim to control the distribution of client queries. They classify clients that deviate from acceptable distributions as adverse and subsequently restrict their access to the model.

Any client interacting with the ML model, whether adverse or benign, must query in accordance with an acceptable distribution. If they do not, the OMED will refuse service. But how should we determine the appropriate acceptable distributions? To date, these distributions have been chosen heuristically. For instance, in [JSMA19], an acceptable distribution is one where the distribution of Hamming distances between queries is normally distributed.

No formal definitions of security against model extraction have been proposed. Moreover, there has been limited formal work to understand the theoretical foundations of the observational defenses suggested in the literature. This gap was highlighted by Vinod Vaikuntanathan in his talk at the Privacy Preserving Machine Learning workshop at CRYPTO '21. As a result, a "cat-and-mouse" dynamic has developed between attacks and defenses, with no satisfying guarantees being established for either side.

CAN OMEDS PROVIDE PROVABLE SECURITY?

In [Kar23], we explore whether OMEDs can provide satisfying provable guarantees of security. Inspired by modern Cryptography, where the security of protocols is mathematically guaranteed by the infeasibility of certain hard computational problems, we sought to apply similar principles to model extraction defenses.

But how do we define security against model extraction? An initial approach might consider leveraging zero-knowledge style security, where a client learns nothing about the ML model beyond what they could have learned prior to interacting with it. While appealing, this is overly restrictive—at a minimum, the client should be able to learn some information "in good faith" from interacting with the model.

A revised security goal could instead ensure that a client learns nothing beyond what they could learn from a set of random queries to the model. This strikes a balance between the overly restrictive zero-knowledge guarantee and allowing complete query access.

The implicit security model of OMEDs

One key insight of [Kar23] is that the notion of security implicitly underlying existing OMEDs aligns with this revised goal. While OMED literature typically cites the objective of detecting model extraction attempts, the downstream effect is confining client queries to specific, acceptable distributions by enforcing benign behavior. This enforcement assumes that any information a benign client can deduce from queries sampled from these distributions is considered secure.

Consider OMEDs for ML classifiers. The theory of statistical learning, particularly the VC dimension framework, suggests that a number of samples proportional to the VC dimension suffices for PAC learning. This seems to undermine OMED-based protection: an adversary with unbounded computational power could simply query according to an acceptable distribution sufficiently many times and apply a PAC-learning algorithm. The outcome would be a function that closely approximates the underlying ML model with high confidence.

What about computationally bounded adversaries?

However, this attack model does not account for the complexity of the implied extraction attack. Depending on the model's complexity, such an attack may require superpolynomial query and computational resources. For many important classifier families (e.g., boolean decision trees), no polynomial-time PAC-learning algorithms are known, despite extensive efforts from the learning theory community. This remains true even when queries are restricted to uniformly distributed examples and the decision tree is "typical" rather than worst-case hard.

This provides evidence that the security model implicitly considered by observational defenses may effectively prevent unwanted model extraction by computationally bounded adversaries. The OMED constrains the adversary to interact with the query interface in a manner that mimics an acceptable distribution, from which learning the underlying model is computationally challenging.

Security against model extraction by computationally bounded adversaries can thus be provable in a complexity-theoretic sense, akin to Cryptography. One could aim to establish a reduction from polynomial-time PAC-learning to polynomial-time model extraction in the presence of observational defenses: "any efficient algorithm capable of learning an approximation of a proprietary ML model while constrained by an observational defense would yield a PAC-learning algorithm, which is currently beyond all known techniques."

COVERT LEARNING

Having established that OMEDs could potentially provide provable security against computationally bounded adversaries, the next natural question is: can OMEDs be efficiently implemented to withstand these adversaries? To answer this, we need to introduce the concept of Covert Learning [CK21]—a learning algorithm that lies at the center of the impossibility results of [Kar23].

The Covert Learning model is a variant of Valiant's PAC-learning model, situated in the agnostic learning with queries setting. In agnostic learning with queries, a learning algorithm has access to an oracle that labels points in the domain of the target concept. These points can be chosen arbitrarily by the learning algorithm. In the "agnostic" setting, the goal is for the learning algorithm to identify a model (within some predefined "touchstone" class) that approximates an arbitrary target concept approximately as well as the best possible model in the touchstone class.

The essence of the Covert Learning model is to formalize a new type of privacy in learning theory. Specifically, Covert Learning algorithms provide the guarantee that the queries leak very little information about the concept, with respect to an adversary that can observe the queries. The Covert Learning algorithm gains privacy for what it is learning.

In other words, Covert Learning allows one to learn a concept (using knowledge of some internal randomness only known to itself),[1] while the adversary remains nearly completely "in the dark" about the concept, even given a view of the entire transcript of membership queries and oracle responses. Crucially, the adversary is not privy to the secret randomness of the learning algorithm.

An example to build intuition: imagine being in a course with one teacher and many classmates, where you are graded "on a curve." To finish ranked first, you need to learn the day's concept by asking the teacher yes-or-no questions. The catch is that every classmate observes your questions and the teacher's answers. Can you design a set of questions that allows you to learn the concept while preventing your classmates from learning it similarly? Covert Learning algorithms provide a solution under natural assumptions.

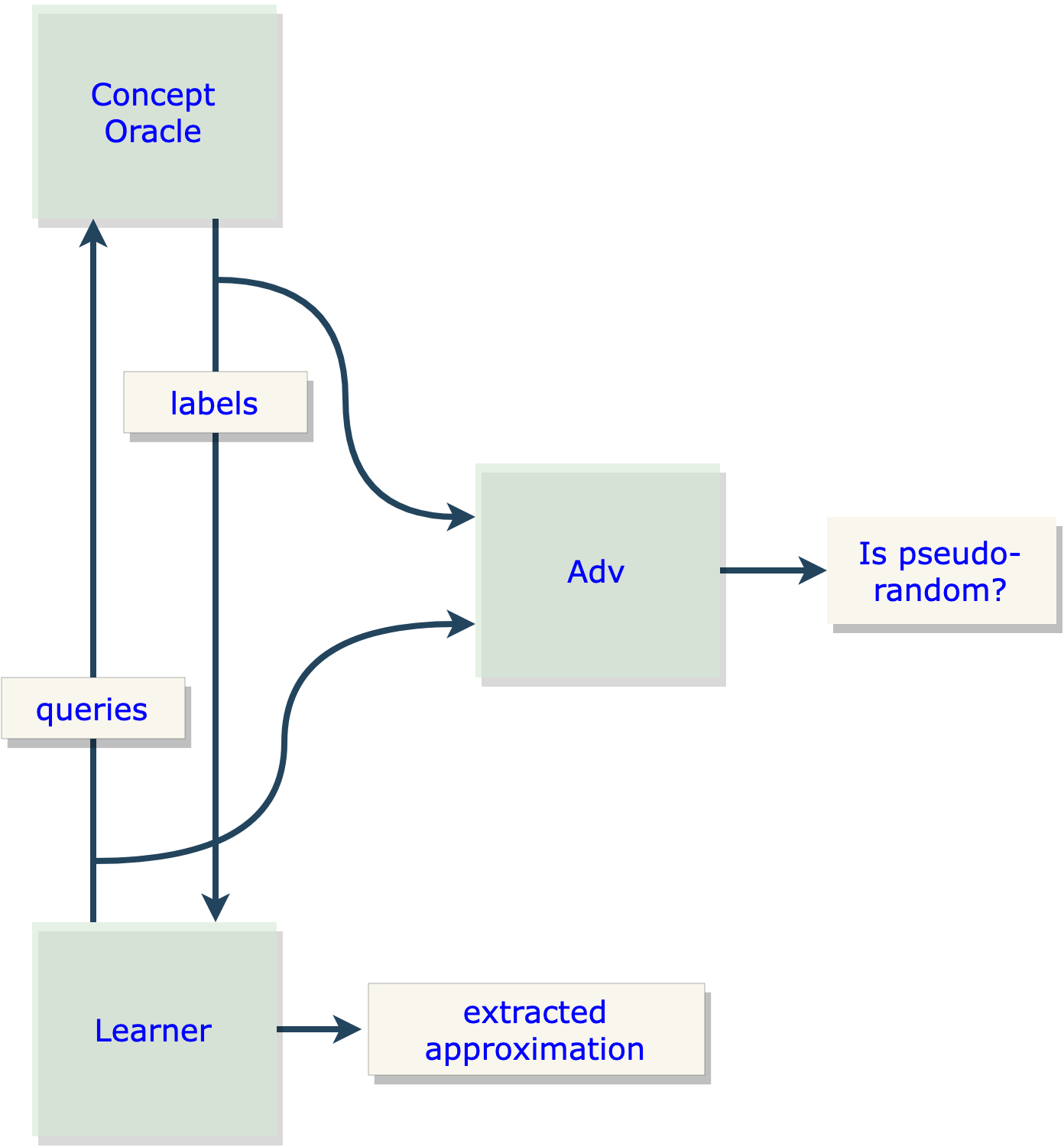

Mathematically, Covert Learning formalizes this using the simulation-based security paradigm from Cryptography. Roughly speaking, any membership query learning algorithm is a Covert Learning algorithm if it has an accompanying simulator (a polynomial-time algorithm) which, when given access only to random examples, emulates the distribution over the membership queries in a computationally indistinguishable way. The simulator can produce a "believable" transcript of the interaction without query access to the underlying concept.

For a concept class where access only to uniformly random examples makes learning computationally hard, this proves that the learning transcript reveals very little information to a computationally bounded adversary:

- The simulator shows that the query distribution is hard to distinguish from one derivable from uniformly random examples.

- If there were an adversary that could learn information about the concept from those queries, that adversary could act as a distinguisher.

These two points together lead to a contradiction. Hence, Covert Learning algorithms are reserved for concept classes not (known to be) efficiently learnable in the original PAC-model with respect to a certain "hard example distribution"—such as decision trees and low-depth neural networks.

A special case worth highlighting is natural Covert Learning. Rather than requiring a simulator for the query interaction, natural Covert Learning requires that the distribution over the membership queries is computationally indistinguishable from a single pre-defined "hard example distribution" (e.g., the uniform distribution).

CONNECTING COVERT LEARNING TO MODEL EXTRACTION

The adversary in natural Covert Learning—a distinguisher that attempts to distinguish the membership queries from random examples—is essentially the same entity as the OMED in a model extraction attack.

See the resemblance between Figures 1 and 2.

This is the key insight that allows us to construct a provable and efficient attack on any OMED.

At first glance, this might seem confusing because normally the adversary in model extraction is the malicious client trying to reverse-engineer the model. However, consider that the job of the OMED is to distinguish benign clients from adverse clients. Thus, from the perspective of the actual adversary (the model extractor), the OMED is adversarial and has essentially the same job as the natural Covert Learning adversary: to detect some property about the client's distribution of queries. This becomes apparent when comparing Figures 1 and 2.

By exploiting this connection, we can demonstrate that natural Covert Learning algorithms form a recipe for an attack that can fool the OMED in the same way they fool the Covert Learning adversary. The learning guarantee of natural Covert Learning lets us argue that the client can still extract the underlying model. Hence, a single Covert Learning attack can achieve extraction while "fooling" any OMED—any OMED will output "accept" with high probability even though an extraction attack is being performed.

THE RESULTS OF [KAR23]

In [Kar23], I provide a negative answer to the question of whether OMEDs can be efficiently implemented against computationally bounded adversaries, through the following contributions:

- I formally define an abstraction of OMEDs by unifying the observational defense techniques proposed in the literature.

- I introduce the concepts of complete and sound OMEDs. Completeness guarantees that any benign client, defined as one interacting according to an acceptable distribution, is accepted with high probability. Soundness ensures that any adverse client, one not querying according to an acceptable distribution, is rejected with very high probability.

- I present a method for generating provably effective and efficient attacks on the abstract defense, assuming that the defense operates in polynomial time and satisfies a basic form of provable completeness. This is achieved by connecting to the Covert Learning model from [CK21]. This connection implies an attack on any decision tree model protected by any OMED, under the standard cryptographic assumption known as Learning Parity with Noise (LPN).

- This attack represents the first provable and efficient attack on any large class of MEDs for a broad class of ML models!

- Finally, I extend an algorithm from [CK21] to operate in a more general setting. This extension produces an even more potent attack on efficient OMEDs, capable of handling OMEDs that satisfy a wider variety of completeness conditions.

WHY COVERT LEARNING IS THE RIGHT TOOL

To wrap up, it is worth emphasizing why Covert Learning algorithms are at the crux of model extraction attacks in the presence of OMEDs.

All models are at risk of extraction due to their exposure via some interface. The VC dimension argument shows that, given enough random examples and sufficient computational power, an adversary has a PAC-learning algorithm that would work for extraction. Since this attack only uses random examples, the model extraction adversary can choose to make queries according to a distribution that is "accepted" by the OMED.

However, the VC dimension argument generally relies on unbounded computational power, and only very simple concept classes are known to be PAC-learnable in polynomial time from random examples (e.g., linear models). The natural question is whether there exist effective defenses against polynomial-time adversaries, as is customary in modern Cryptography. Many important models (e.g., decision trees, low-depth neural networks) are not known to be PAC-learnable in polynomial time from random examples. On the other hand, a class like decision trees is learnable in polynomial time when using carefully synthesized queries. But classic membership query algorithms like the Kushilevitz-Mansour algorithm [KM93] make queries that can be tested and identified as problematic by the OMED.

Covert Learning algorithms bridge the gap between these two settings. They constitute attacks that are benign-looking but actually adverse: polynomial-time membership query learning algorithms that synthesize queries in a special way that is provably hard to distinguish from the classic PAC-learning with random examples setting. This means that Covert Learning attacks are both undetectable by an efficient OMED and run in polynomial time. By contrast, the VC dimension argument is undetectable but not efficient, while the membership query attack of [KM93] is efficient but is detectable by the OMED.

[1] Note that the internal randomness is not even shared with the concept oracle. To use an analogy from Cryptography, Covert Learning is "public-key" in nature, as opposed to "symmetric-key," which might rely on shared randomness between the learner and the concept oracle.

REFERENCES

- [BCK+22] Eric Binnendyk, Marco Carmosino, Antonina Kolokolova, Ramyaa Ramyaa, and Manuel Sabin. Learning with distributional inverters. In International Conference on Algorithmic Learning Theory, pages 90–106. PMLR, 2022.

- [BFKL93] Avrim Blum, Merrick Furst, Michael Kearns, and Richard J Lipton. Cryptographic primitives based on hard learning problems. In Annual International Cryptology Conference, pages 278–291. Springer, 1993.

- [CCG+20] Varun Chandrasekaran, Kamalika Chaudhuri, Irene Giacomelli, Somesh Jha, and Songbai Yan. Exploring connections between active learning and model extraction. In Proceedings of the 29th USENIX Security Symposium (USENIX Security 20), pages 1309–1326, 2020.

- [CJM20] Nicholas Carlini, Matthew Jagielski, and Ilya Mironov. Cryptanalytic extraction of neural network models. arXiv preprint arXiv:2003.04884, 2020.

- [CK21] Ran Canetti and Ari Karchmer. Covert learning: How to learn with an untrusted intermediary. In Theory of Cryptography Conference, pages 1–31. Springer, 2021.

- [CLP13] Kai-Min Chung, Edward Lui, and Rafael Pass. Can theories be tested? A cryptographic treatment of forecast testing. In Proceedings of the 4th Conference on Innovations in Theoretical Computer Science, pages 47–56, 2013.

- [DKLP22] Adam Dziedzic, Muhammad Ahmad Kaleem, Yu Shen Lu, and Nicolas Papernot. Increasing the cost of model extraction with calibrated proof of work. arXiv preprint arXiv:2201.09243, 2022.

- [GBDL+16] Ran Gilad-Bachrach, Nathan Dowlin, Kim Laine, Kristin Lauter, Michael Naehrig, and John Wernsing. Cryptonets: Applying neural networks to encrypted data with high throughput and accuracy. In International Conference on Machine Learning, pages 201–210. PMLR, 2016.

- [GL89] Oded Goldreich and Leonid A Levin. A hard-core predicate for all one-way functions. In Proceedings of the Twenty-First Annual ACM Symposium on Theory of Computing, pages 25–32, 1989.

- [GMR89] Shafi Goldwasser, Silvio Micali, and Charles Rackoff. The knowledge complexity of interactive proof systems. SIAM Journal on Computing, 18(1):186–208, 1989.

- [GPV08] Craig Gentry, Chris Peikert, and Vinod Vaikuntanathan. Trapdoors for hard lattices and new cryptographic constructions. In Proceedings of the 40th Annual ACM Symposium on Theory of Computing, pages 197–206, 2008.

- [JCB+20] Matthew Jagielski, Nicholas Carlini, David Berthelot, Alex Kurakin, and Nicolas Papernot. High accuracy and high fidelity extraction of neural networks. In Proceedings of the 29th USENIX Security Symposium (USENIX Security 20), pages 1345–1362, 2020.

- [JSMA19] Mika Juuti, Sebastian Szyller, Samuel Marchal, and N Asokan. Prada: protecting against DNN model stealing attacks. In 2019 IEEE European Symposium on Security and Privacy (EuroS&P), pages 512–527. IEEE, 2019.

- [Kar23] Ari Karchmer. Theoretical limits of provable security against model extraction by efficient observational defenses. In 2023 IEEE Conference on Secure and Trustworthy Machine Learning (SaTML), pages 605–621. IEEE, 2023.

- [KM93] Eyal Kushilevitz and Yishay Mansour. Learning decision trees using the Fourier spectrum. SIAM Journal on Computing, 22(6):1331–1348, 1993.

- [KMAM18] Manish Kesarwani, Bhaskar Mukhoty, Vijay Arya, and Sameep Mehta. Model extraction warning in MLaaS paradigm. In Proceedings of the 34th Annual Computer Security Applications Conference, pages 371–380, 2018.

- [KST09] Adam Tauman Kalai, Alex Samorodnitsky, and Shang-Hua Teng. Learning and smoothed analysis. In 2009 50th Annual IEEE Symposium on Foundations of Computer Science, pages 395–404. IEEE, 2009.

- [Nan21] Mikito Nanashima. A theory of heuristic learnability. In Conference on Learning Theory, pages 3483–3525. PMLR, 2021.

- [O'D14] Ryan O'Donnell. Analysis of Boolean functions. Cambridge University Press, 2014.

- [PGKS21] Soham Pal, Yash Gupta, Aditya Kanade, and Shirish Shevade. Stateful detection of model extraction attacks. arXiv preprint arXiv:2107.05166, 2021.

- [Pie12] Krzysztof Pietrzak. Cryptography from learning parity with noise. In International Conference on Current Trends in Theory and Practice of Computer Science, pages 99–114. Springer, 2012.

- [PMG+17] Nicolas Papernot, Patrick McDaniel, Ian Goodfellow, Somesh Jha, Z Berkay Celik, and Ananthram Swami. Practical black-box attacks against machine learning. In Proceedings of the 2017 ACM on Asia Conference on Computer and Communications Security, pages 506–519, 2017.

- [RWT+18] M Sadegh Riazi, Christian Weinert, Oleksandr Tkachenko, Ebrahim M Songhori, Thomas Schneider, and Farinaz Koushanfar. Chameleon: A hybrid secure computation framework for machine learning applications. In Proceedings of the 2018 IEEE Conference on Secure and Trustworthy Machine Learning (SaTML), pages 707–721, 2018.

- [TZJ+16] Florian Tramer, Fan Zhang, Ari Juels, Michael K Reiter, and Thomas Ristenpart. Stealing machine learning models via prediction APIs. In 25th USENIX Security Symposium (USENIX Security 16), pages 601–618, 2016.

- [Vai21] Vinod Vaikuntanathan. Secure computation and PPML: Progress and challenges. Video, 2021.

- [YZ16] Yu Yu and Jiang Zhang. Cryptography with auxiliary input and trapdoor from constant-noise LPN. In Annual International Cryptology Conference, pages 214–243. Springer, 2016.